OVERVIEW

Amazon Camera Modality

2016 — 2018

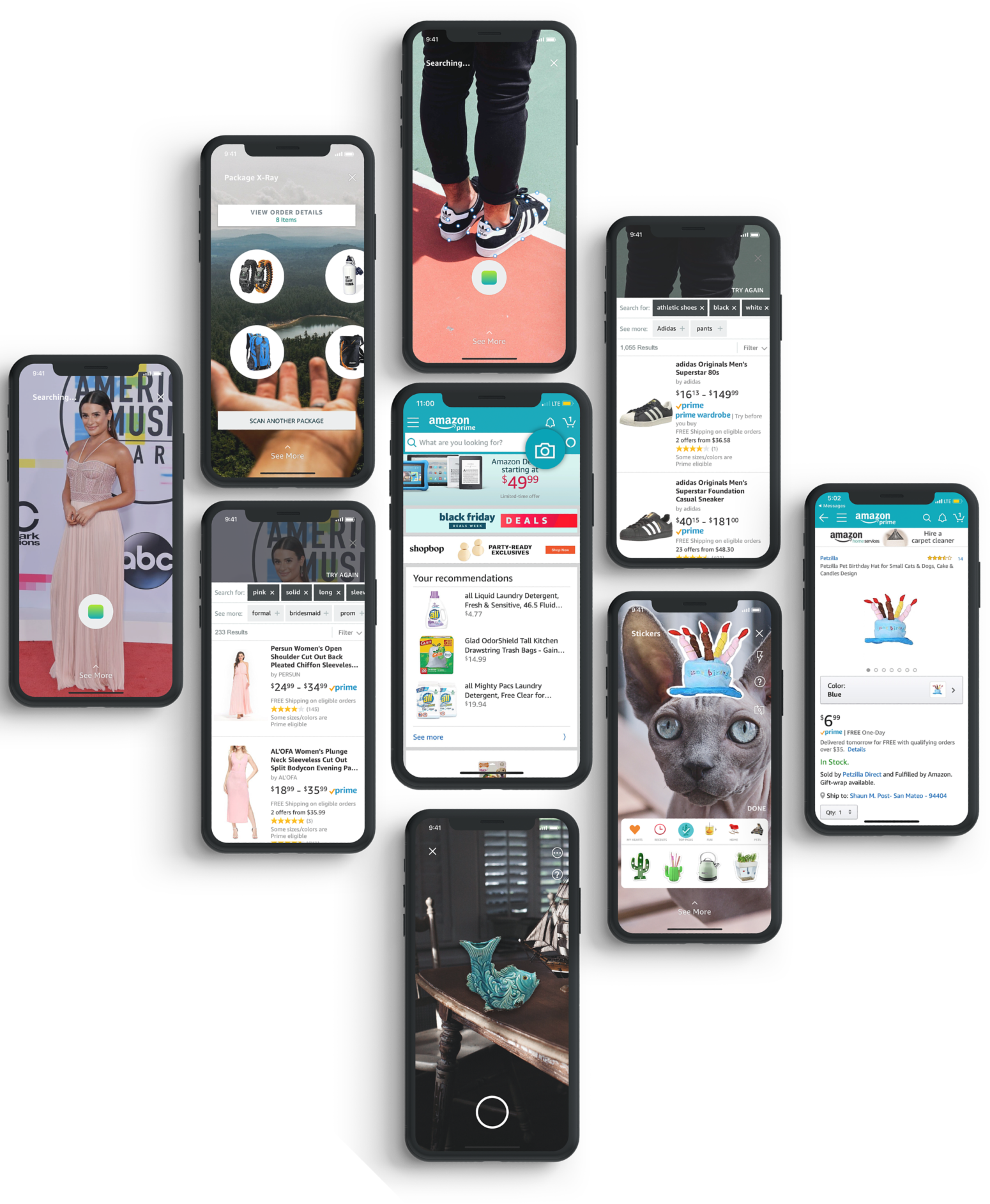

I spent two years at Amazon working on the biggest redesign of Camera launched in Amazon's mobile app. The work drew on a wide range of skills, from a complete overhaul of the mobile experience to building out an end-to-end information architecture and visual specification. Customers can engage in a variety of camera-based activities that make shopping easier, such as searching with visual search, scanning barcodes, seeing inside their Amazon package, adding a gift card to their account, previewing products in augmented reality, and more.

Goal: Determine the best user-centered design for separating and distinguishing features (or 'modes'), to improve the customer experience of discovering, capturing, unifying and repeating the varied activities offered in Camera on Android and iOS.

Why: Customers want to see a product, then buy it in as few steps as possible. They are also unaware of capabilities such as barcode recognition, Package X-Ray, photo upload, and augmented reality viewing. These capabilities have significantly distinct interaction or post-recognition experiences compared to the default mode (product search), making this project ambiguous and challenging to solve.

Role: Lead Designer, UX/UI design, user research, prototyping, product strategy.

Collaborations: I collaborated with 3 other designers, multiple computer vision, iOS and Android engineers, an engineering manager and a PM, and received input and feedback on my designs from the Amazon Shopping and Mobile App teams. The other designers designed some individual modes while I oversaw the entire feature set and UI/UX.

Process: I oversaw the design of 'modes' alongside my manager, leading 3 designers on my team to create a harmonious experience across new and existing features. I collaborated with, and managed feedback from, internal and external design partners, product managers, senior leaders and a large-scale development team through the iOS and Android releases.

Outcome: Camera Modality is live in the Amazon app.

USER-CENTERED APPROACH

Content Architecture in Swipe Approach

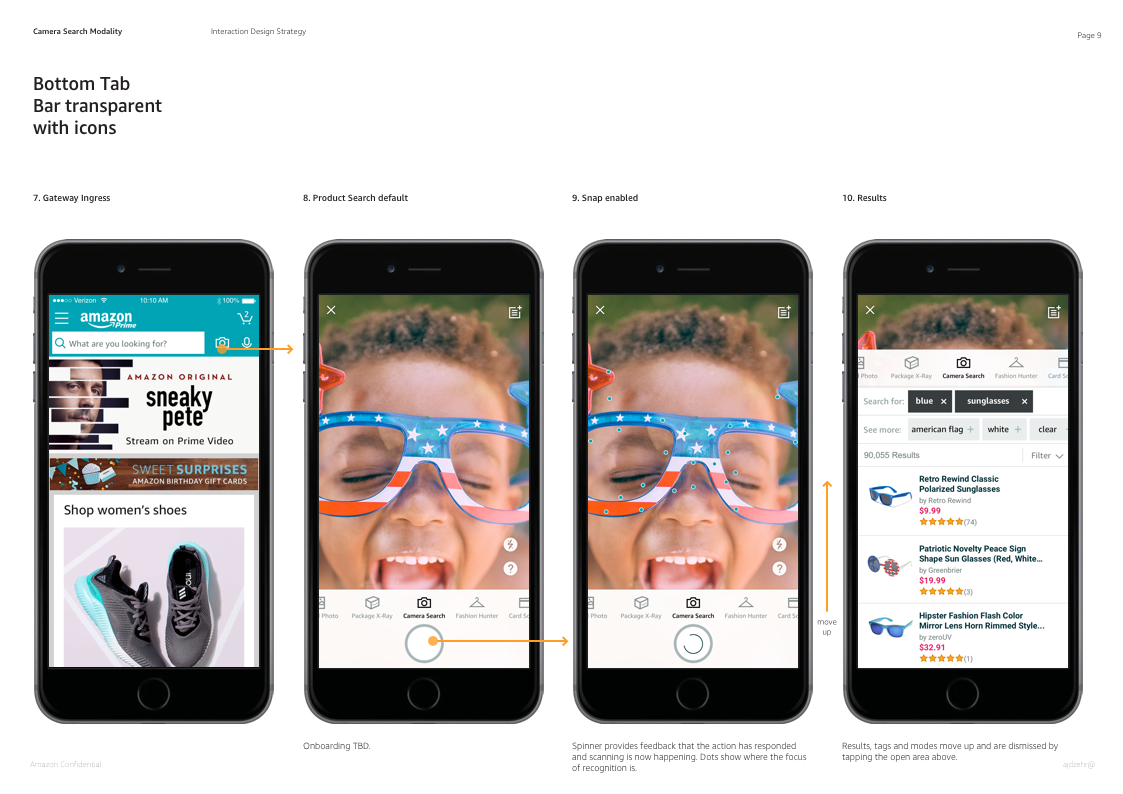

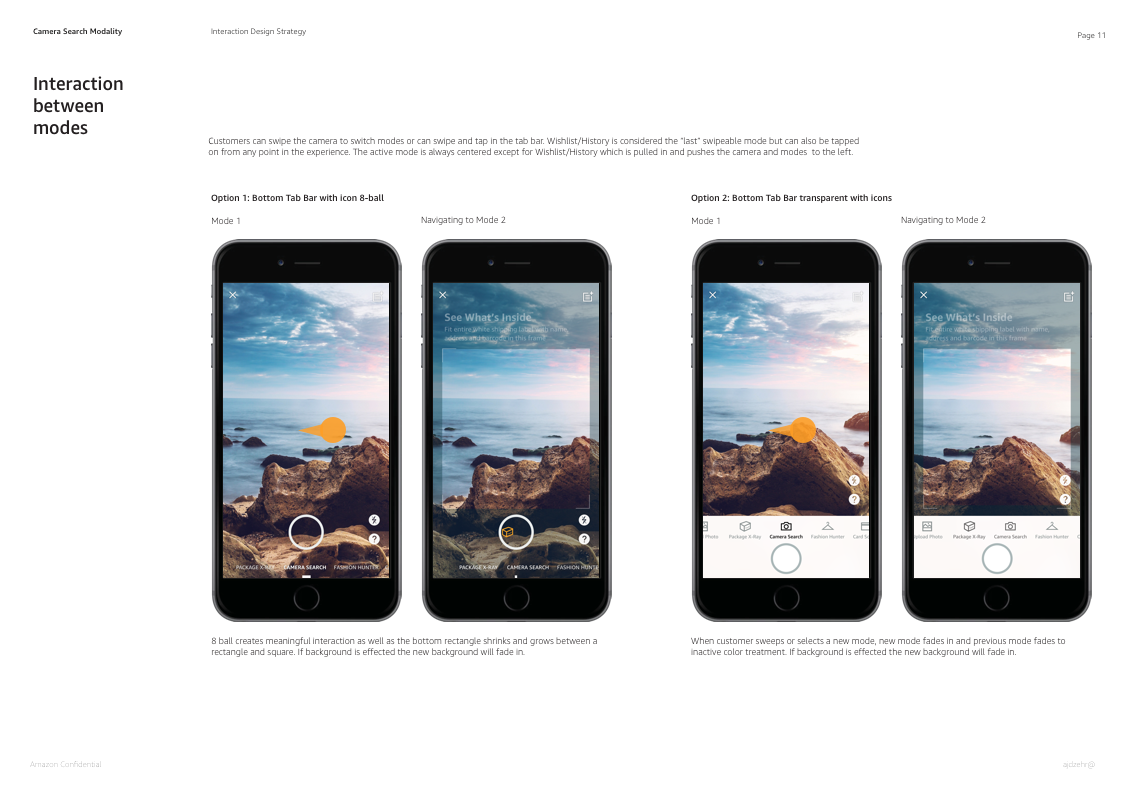

Each mode exists to make customers more successful at a particular activity, like finding a product faster, getting better results, or exploring the Amazon catalogue visually. The features organized into modes have significantly distinct interaction and post-recognition experiences compared to the default mode, product search. Because the modes were largely unrelated, the content architecture (from 'mode' title, to results display, to filtering, to browsing) had to be simple enough for customers to quickly understand each one, especially when deep linking from outside of the camera or from ad campaigns. Customers easily understood how to swipe between options and how the titles related to the camera activity.

Initial Approach Launched

Launched across all locales in August 2017, the 'modes' sheet shows the various Camera features customers can engage with, including Product Search, Package X-Ray, Barcode Scanner, SmileCode Scanner, AR View and Stickers, so customers can see all their options and make their choice up front. This was our first launched solution, designed to support a growing number of 'modes'. I focused on helping customers keep track of where they are in the experience and giving them an easy way to change 'modes' without interfering with the other CTAs being displayed.

Prime Day Promotion

While our redesign improved discoverability within the camera, we are still challenged to improve discoverability outside of it. I often deliver a wide range of interaction and visual work used to promote Camera and its features. For Prime Day 2018, we offered a $5 coupon to customers who tried using Camera.

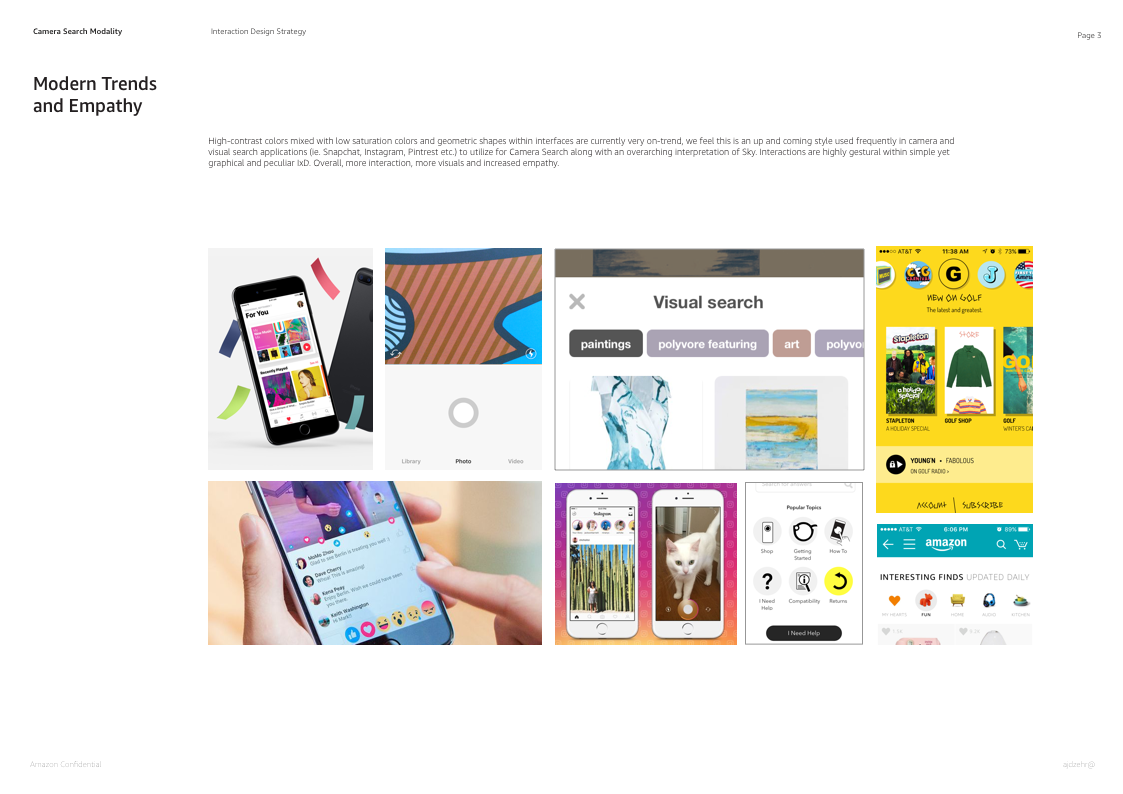

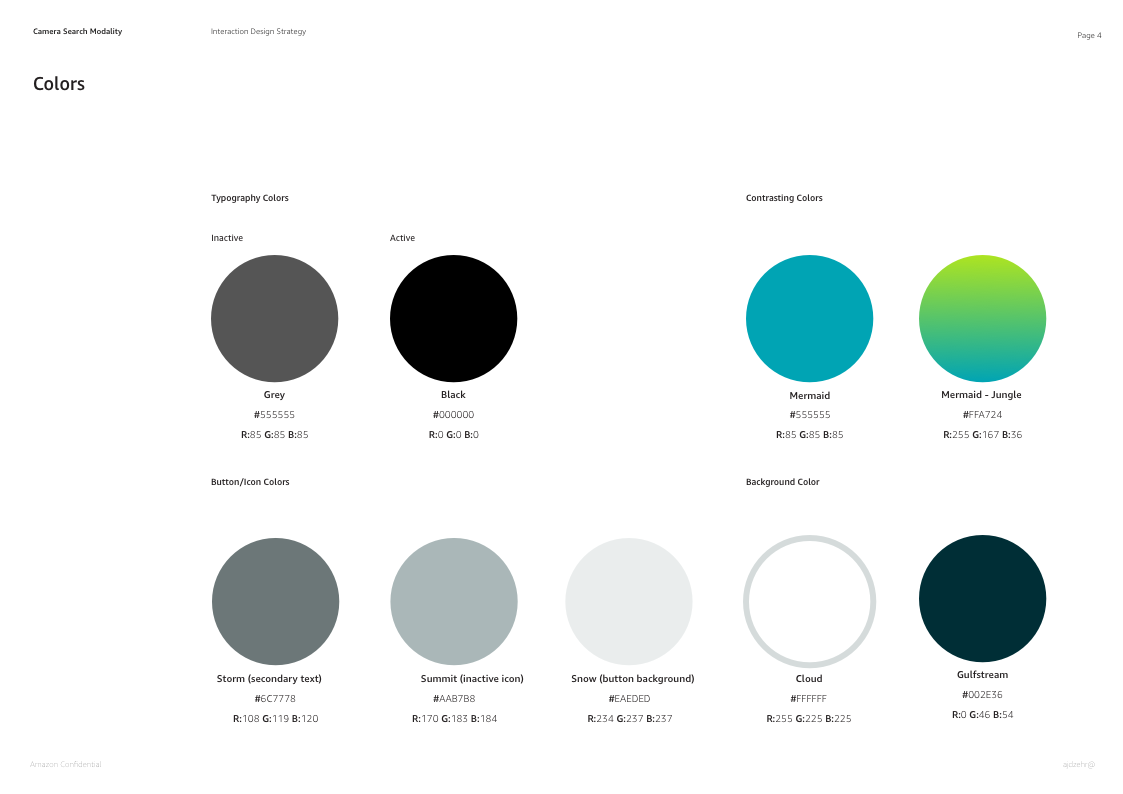

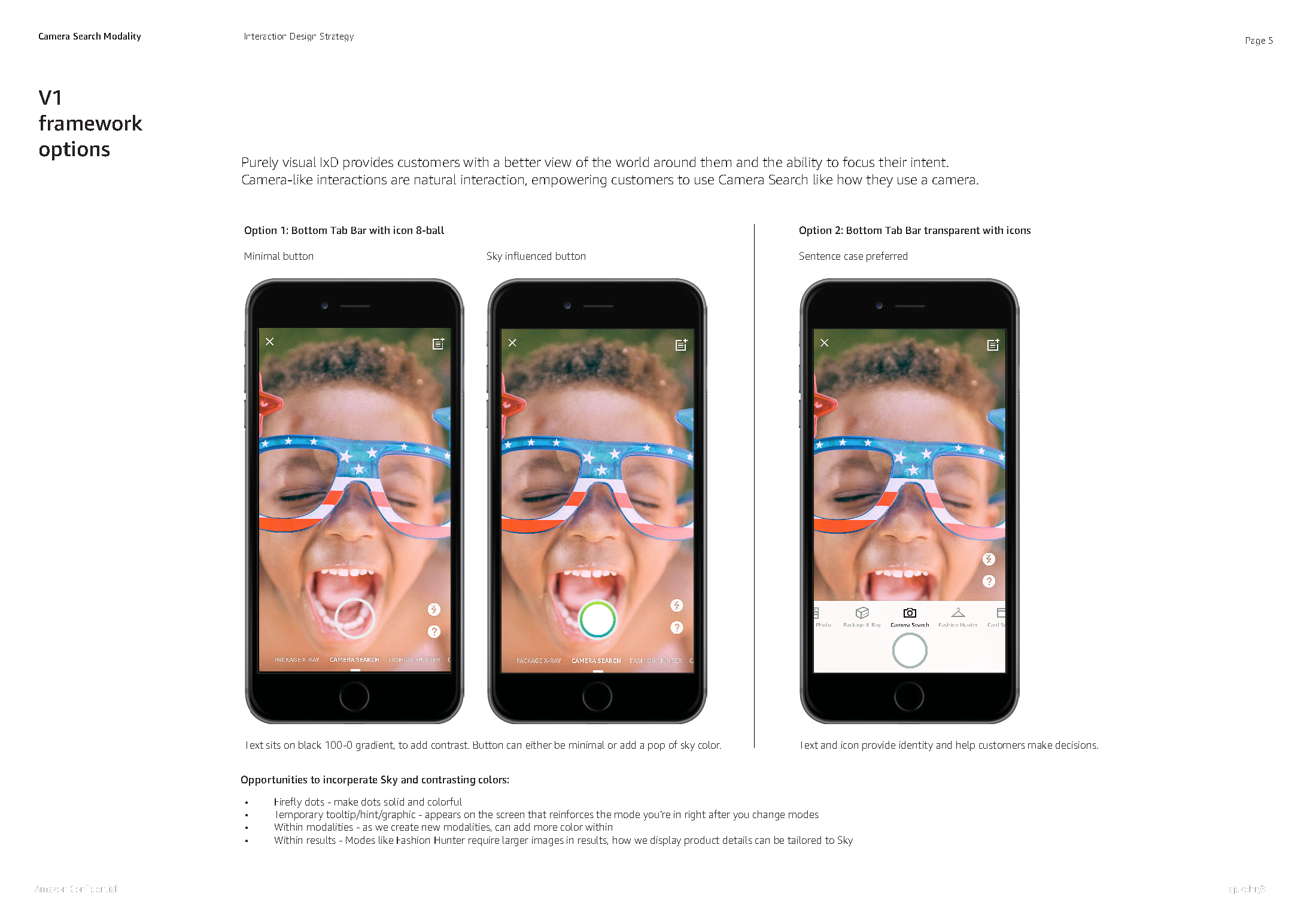

Color Exploration and IA Specification

I conducted extensive visual competitive audits and color theory studies to develop the visual style guide and UI elements. I presented at various stages of my process to the multidisciplinary team and the core Amazon design team, to align my vision with Amazon's guidelines, typeface and type ramp as I developed it from wireframes to visual guidelines.

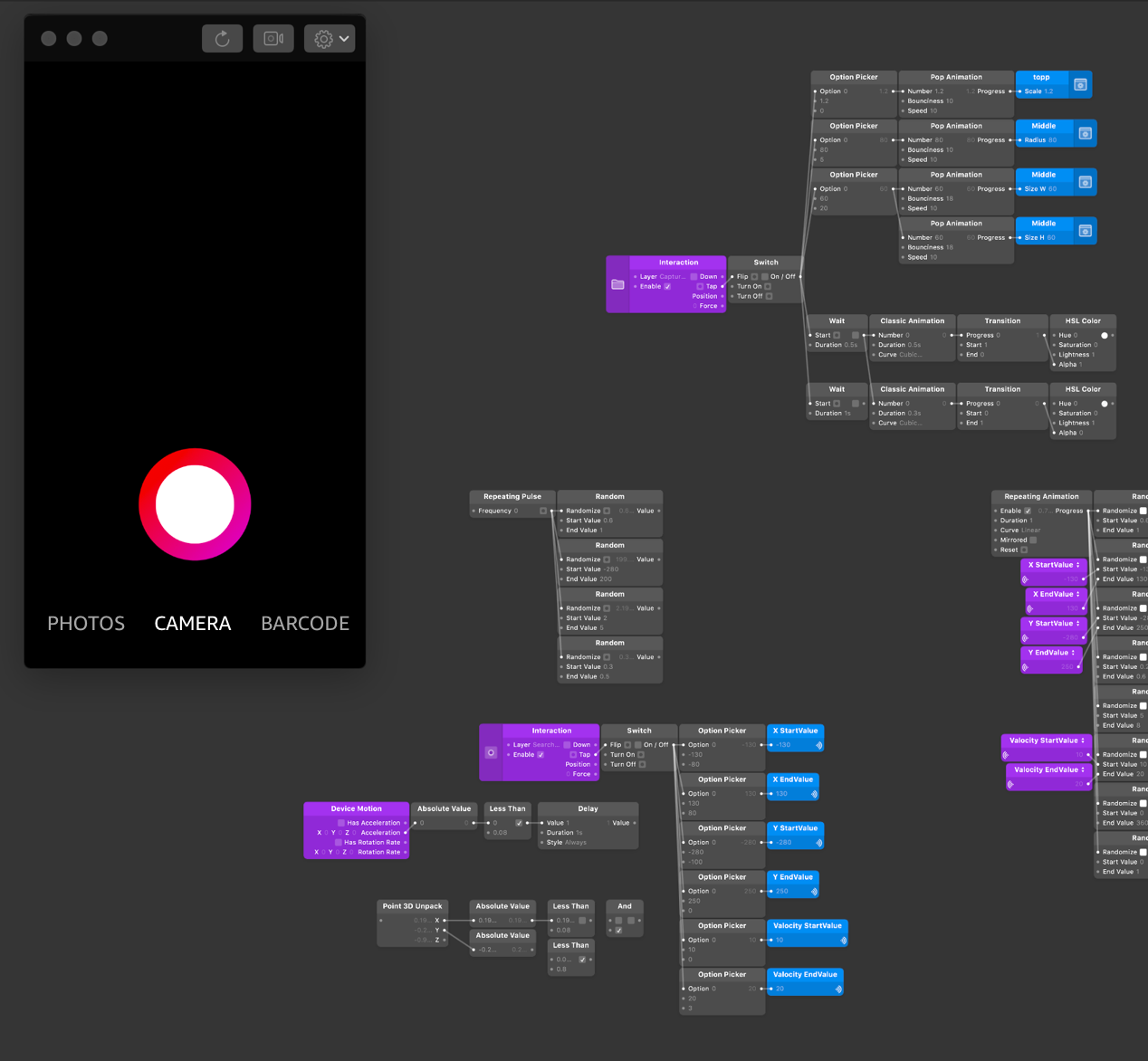

Prototyping & Animating Gradient Capture

I first prototyped the camera button in Origami to quickly get a feel for its position on the screen and its interaction. I then built the UI elements around it, developing an end-to-end prototype for usability testing on a live camera.

Usability with Power and First-Time Customers

I prepared and conducted user studies with my product partner, using the Origami prototypes I had created to test interaction and navigational elements.

Impact of Implementation

Across these releases, our team solved multiple complex problems, including improving image query framing, addressing unexpected results and raising awareness of capabilities, and overall raised the bar in visual and interaction design. Our findings led to a higher retention rate for the product, about 30% initially and growing. As we continue to improve the experience and ingress for this feature, we are constantly learning more about our customers and what is truly important to them. Giving customers more contextual ways of entering visual search appears to work better than funneling them through our initial gateway.

Selected Works

Instagram Shopping Try On #Software #AR
Product Design

Facebook Spark AR Commerce #Speaking
Product Design

Amazon StyleSnap #Software #VisualSearch
Upload photos or screenshots to find visually similar styles on Amazon #VisualSearch #Mobile

Amazon Camera Modality #Software #AR
Biggest redesign of Visual Search technology across Amazon #Mobile #AR #Camera

Amazon Package X-Ray #Software #AR
See which of your packages holds which gift, without opening them #Mobile #AR #Camera

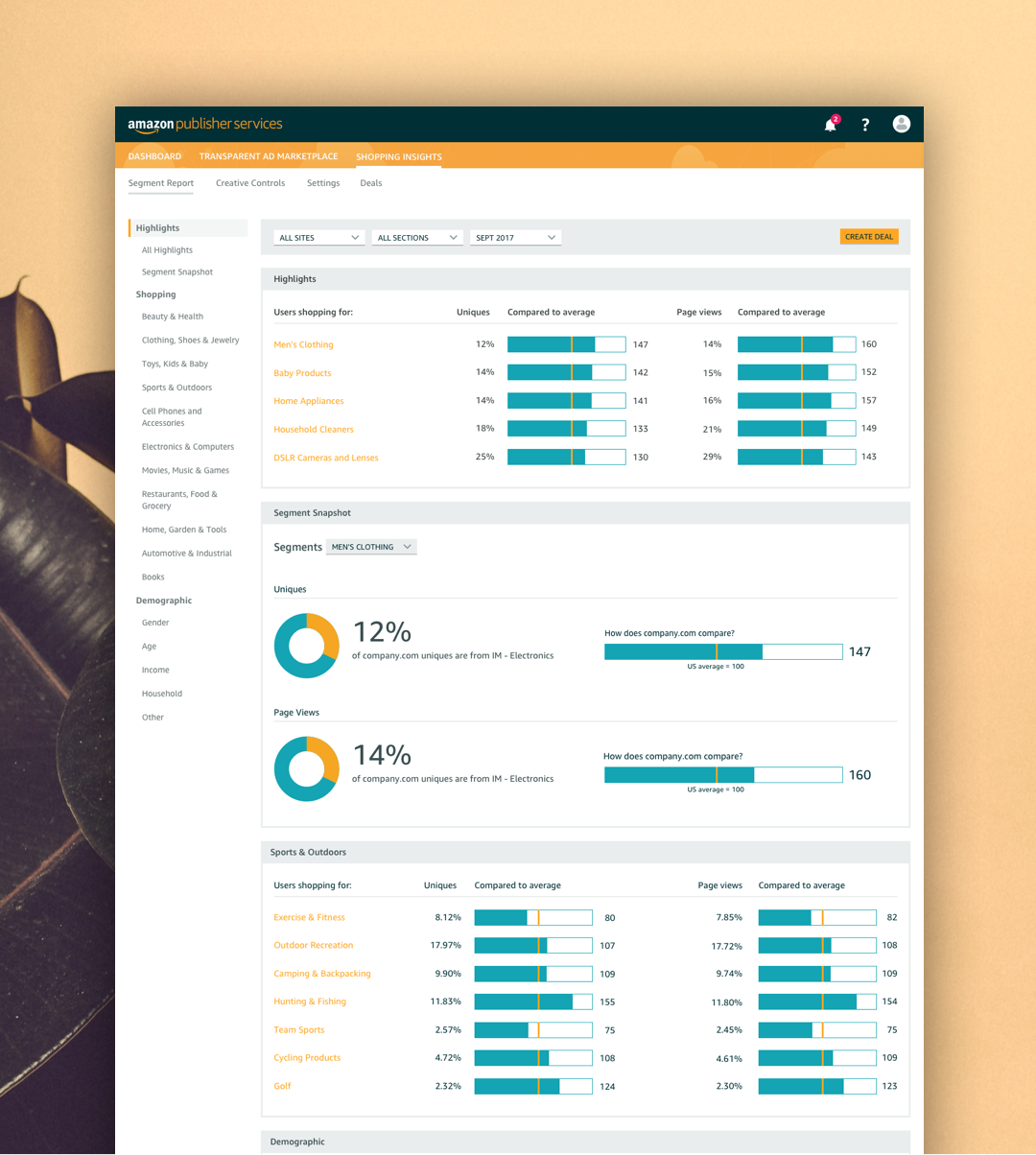

Amazon Publisher Services #Desktop #Cloud
New suite of cloud services, built to monetize and grow your media business #Desktop #Advertising

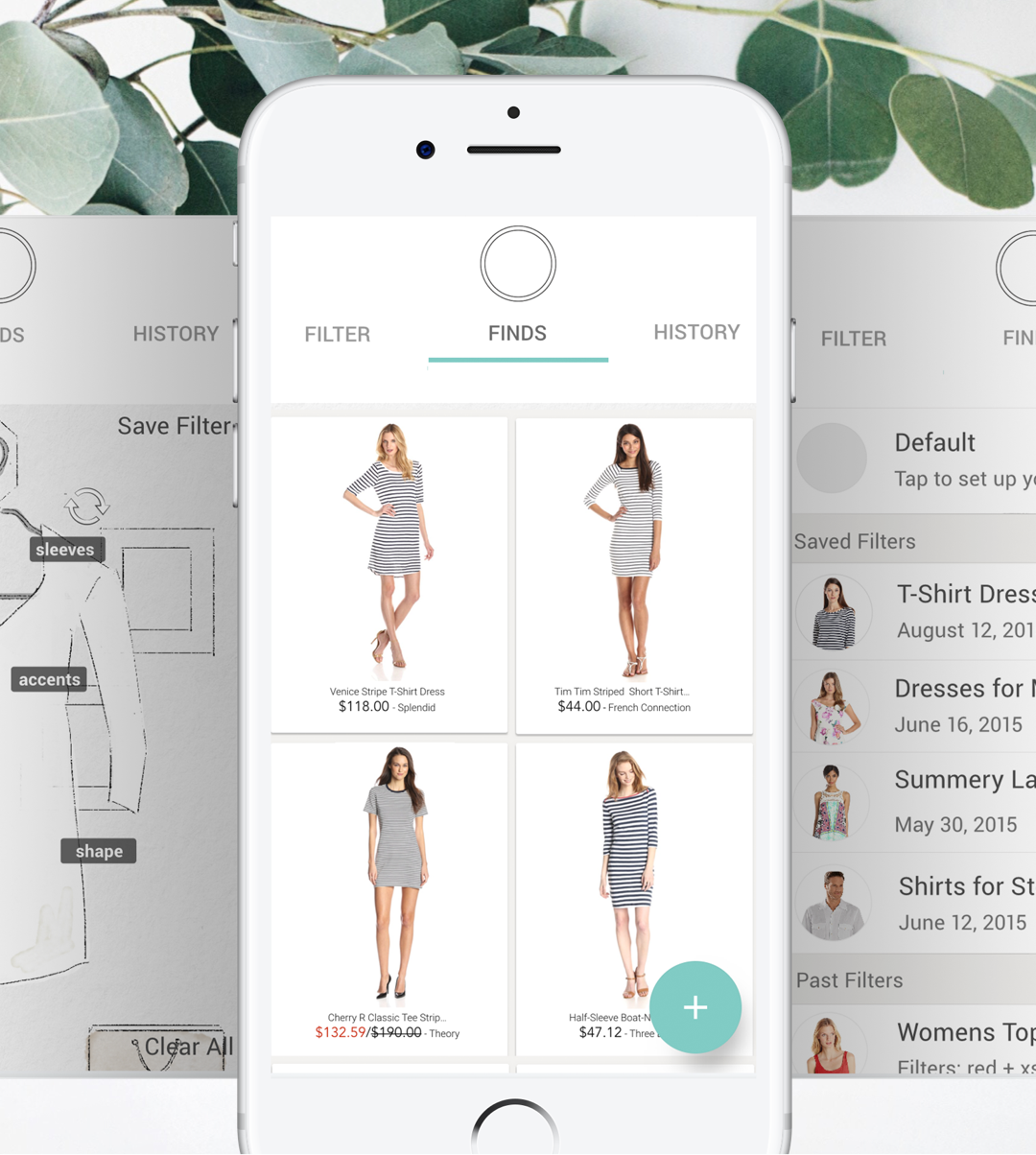

Amazon Visual Fashion Finder #Software #AI
Filter the most important aspects when shopping for garments #Mobile #Internship #Fashion #AI

Photography #ArtDirection #Print
Collection of photographed International Editorials #Print #Digital #Identity #Photography

© 2025 Andrea Alam